Mark Tansey, “Coastline Measure” (1987)

The pollsters got it wrong again, just as they did with the Brexit vote and the Colombia peace vote. In each case, they incorrectly predicted one side would win (Hillary Clinton, Remain, and Yes), and many of us were taken in by the apparent certainty of the results.

I certainly was. In each case, I told family members, friends, and acquaintances it was quite possible the polls were wrong. But still, as the day approached, I found myself believing the “experts.”

It still seems, when it comes to polling, we have a great deal of difficulty with uncertainty:

Berwood Yost of Franklin & Marshall College said he wants to see polling get more comfortable with uncertainty. “The incentives now favor offering a single number that looks similar to other polls instead of really trying to report on the many possible campaign elements that could affect the outcome,” Yost said. “Certainty is rewarded, it seems.”

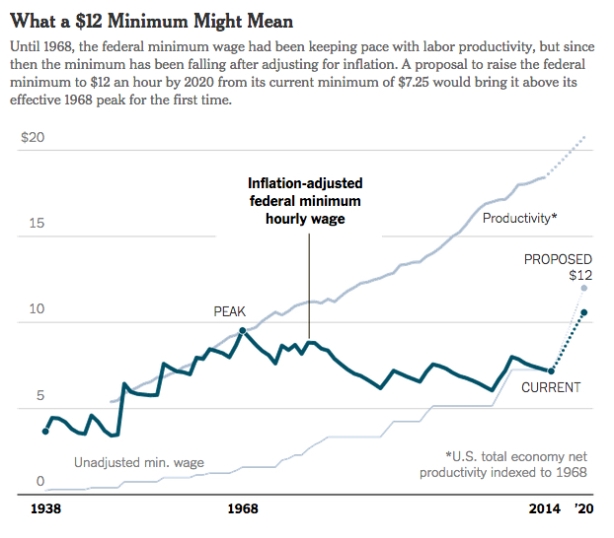

But election results are not the only area where uncertainty remains a problem. Dani Rodrik thinks mainstream economists would do a better job defending the status quo if they acknowledged their uncertainty about the effects of globalization.

This reluctance to be honest about trade has cost economists their credibility with the public. Worse still, it has fed their opponents’ narrative. Economists’ failure to provide the full picture on trade, with all of the necessary distinctions and caveats, has made it easier to tar trade, often wrongly, with all sorts of ill effects. . .

In short, had economists gone public with the caveats, uncertainties, and skepticism of the seminar room, they might have become better defenders of the world economy.

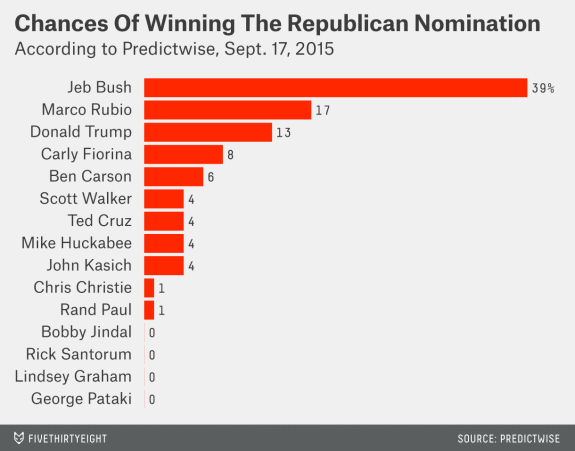

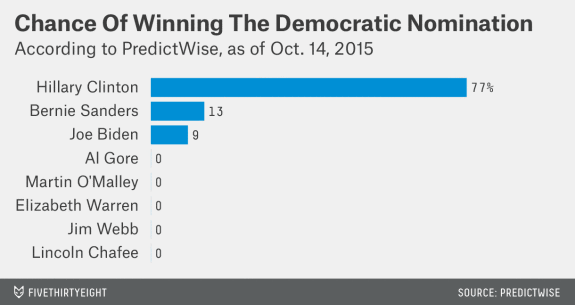

To be fair, both groups—pollsters and mainstream economists—acknowledge the existence of uncertainty. Pollsters (and especially poll-based modelers, like one of the best, Nate Silver, as I’ve discussed here and here) always say they’re recognizing and capturing uncertainty, for example, in the “error term.”

Even Silver, whose model included a much higher probability of a Donald Trump victory than most others, expressed both defensiveness about and confidence in his forecast:

Despite what you might think, we haven’t been trying to scare anyone with these updates. The goal of a probabilistic model is not to provide deterministic predictions (“Clinton will win Wisconsin”) but instead to provide an assessment of probabilities and risks. In 2012, the risks to Obama were lower than was commonly acknowledged, because of the low number of undecided voters and his unusually robust polling in swing states. In 2016, just the opposite is true: There are lots of undecideds, and Clinton’s polling leads are somewhat thin in swing states. Nonetheless, Clinton is probably going to win, and she could win by a big margin.

As for the mainstream economists, while they may acknowledge exceptions to the rule that “everyone benefits” from free markets and international trade in some of their models and seminar discussions, they acknowledge no uncertainty whatsoever when it comes to celebrating the current economic system in their textbooks and public pronouncements.

So, what’s the alternative? They (and we) need to find better ways of discussing and possibly “modeling” uncertainty. Since the margins of error, different probabilities, and exceptions to the rule are ways of hedging their bets anyway, why not just discuss the range of possible outcomes and all of what is included and excluded, said and unsaid, measurable and unmeasurable, and so forth?

The election pollsters and statisticians may claim the public demands a single projection, prediction, or forecast. By the same token, the mainstream economists are no doubt afraid of letting the barbarian critics through the gates. In both cases, the effect is to narrow the range of factors considered relevant and of outcomes considered likely.

One alternative is to open up the models and develop a more robust language to talk about fundamental uncertainty, one that starts from the admission that “we simply don’t know what’s going to happen.” In both cases, that would mean presenting the full range of possible outcomes (including the possibility that there can be still other possibilities, which haven’t been considered) and discussing the biases built into the models themselves (based on the assumptions that have been used to construct them). Instead of the pseudo-rigor associated with deterministic predictions, we’d have a real rigor predicated on uncertainty, including the uncertainty of the modelers themselves.

Admitting that they (and therefore we) simply don’t know would be a start.